By any sort of measure, it has now been twenty years since this site went up, and a lot has changed. When I “launched” this site (a pompous way to say I cobbled it together and got it online), I was in telecoms and trying to escape the world of corporate IT by using the Mac as a UNIX workstation at home[1].

Then that gradually took over my computing life and I used the Mac everywhere until the pendulum swung back (or sideways, really) and I went to work on cloud computing.

It’s been 20 years, and I’m now at Microsoft. Back working in telecoms, as it happens, and back using Apple platforms (mostly the iPad now, really) as a way to keep my personal computing environment as separate as possible from work.

But everything else has changed almost beyond recognition.

The Mac

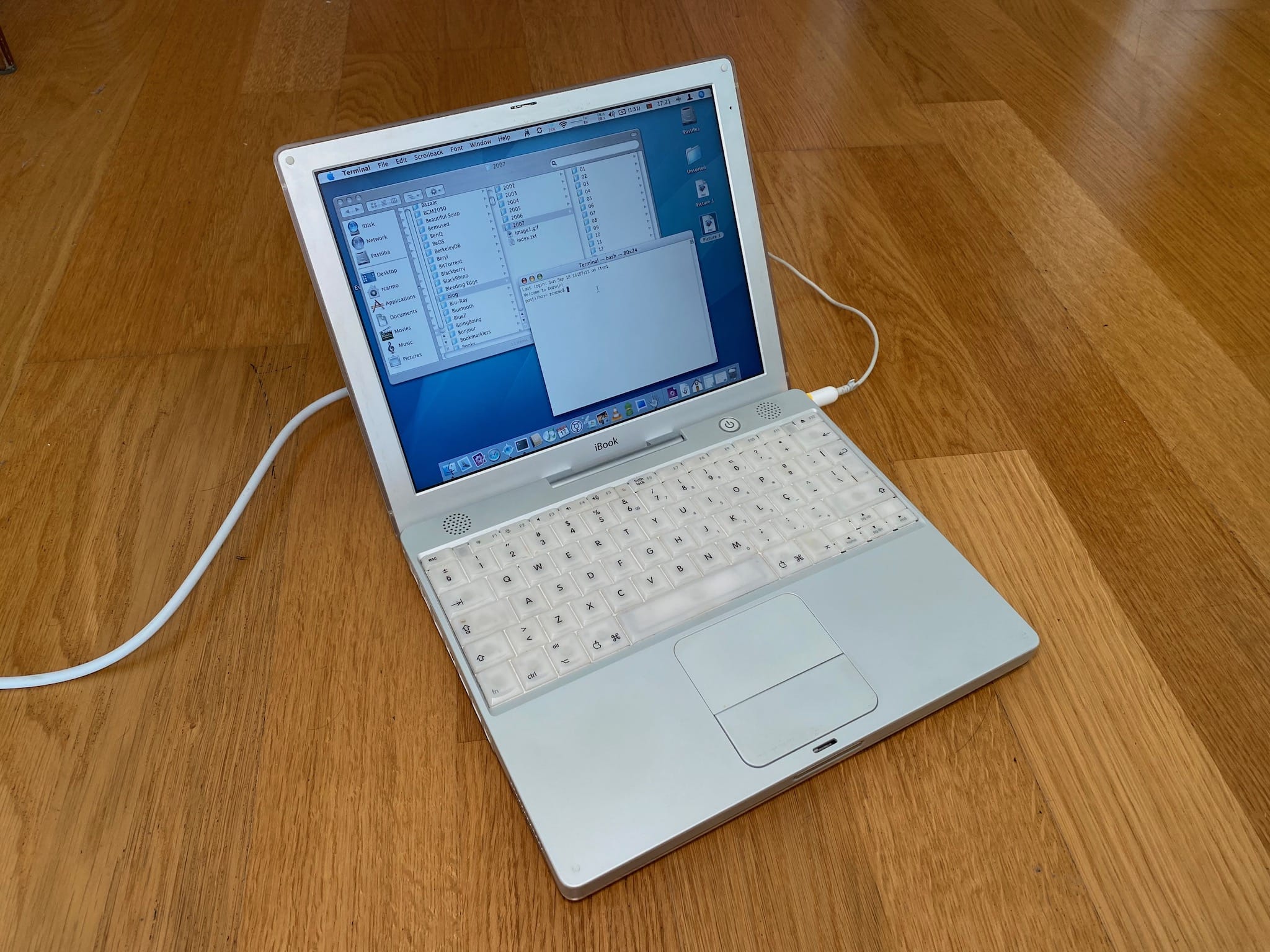

Here’s the machine that, in more ways than one, started this site:

I had some history with the Mac before getting that iBook. In fact, now that I think about it, I had the good fortune of experiencing all the Mac’s major technology transitions since the Motorola 680x0 Macs I used at college before moving on to a NeXTCube and later to the PC world, “switching” back during the PowerPC years.

The Intel transition was a roller-coaster ride, but nothing compared to Apple Silicon. That has not just fixed most of my hardware gripes (noise, heat and performance) but also neatly converged with many years fiddling with ARM architectures on the side, so I’m pretty happy about the whole thing, and I think Tim is too:

(at least Stable Diffusion agrees)

With a second generation of chips already out and what seems to be a coherent strategy going forward, Apple hardware seems to be in relatively good health these days.

macOS and Native Apps

Software, however… well, not so much. The past 20 years have not been kind to a platform that prided itself on polished user experience, since “thanks” to web technologies UX quality has eroded to a point where companies think Electron-based applications are an acceptable thing to ship.

This is both good and bad because while it allows Mac users to use the same software as their Windows (and Linux) counterparts, the quality of the experience has consistently worsened.

Coupled with the trend towards cloud services (more on that later), this shift towards mass-market web sites and low-quality web-style apps has effectively imploded the indie developer scene–whereas a few years back I would have literally dozens of tiny, high-quality shareware apps installed on my Mac, these days I have no more than a handful.

Part of this might also be due to notarization and the Mac App Store, but the fact remains that most of the desktop applications I use on my Mac (aside from Office) are either from small niche studios or by Open Source projects–and of the latter, pretty much nobody uses Swift or any native Apple framework, so Apple’s ability to drive any kind of sustainable developer ecosystem on the desktop seems pretty much gone.

As a side note, the lack of fully native desktop apps also means that old-style desktop automation is pretty dead, but that is probably worth a full post in itself as soon as we can figure out what Shortcuts can actually do in macOS.

The biggest challenge I see for the Mac these days is thus not hardware, but software differentiation, as well as a still embarrassing lack of QA testing, often deep below the surface.

It is all fine and good to still have great UX and a stable desktop, but for those of us who care about the underlying OS, why should you use macOS when there are still discussions about it wearing out your SSDs early, various performance regressions after upgrades (upgrading to a macOS point release, not a fresh major one, is still considered to be the sane thing to do if you rely on your Mac for a living) and a multitude of paper cuts for those trying to use the UNIX userland?

I’m an extremely tolerant user (remember I use Windows and Linux as well on a daily basis), but even the deep hardware and software integration that Apple Silicon has reinforced starts wearing thin when I have to deal with slightly broken system services–which is why I’ve actually set my sights on Linux (of all things) as a likely end game for all my desktop computing.

And going back to software differentiation, consider this: Linux is now “good enough” that I can buy a mid-range laptop and have it “just work” for 99% of what I do (with 99.5% complete hardware support, too), and I can use largely the same kind of applications I need to use on the Mac.

Not as good, not as polished, but good enough, and I am not even going to discuss Windows…

The iPhone, iPad and iOS

But as it happens, the Mac has actually become the Apple platform I use the least. iOS, in multiple shapes and forms, has taken over both Apple’s priorities and my own daily affairs.

I usually say that the iPad is “my most personal computer”, and my iPad Mini 5 is still the first device I pick up in the morning and the last I put down in the evening. It feels somewhat like the Newton writ large, and I would say that has been the biggest change in computing experience for me over the past 20 years.

Yes, the iPhone was a tremendous breakthrough. It shaped a lot of my career in telecoms, spawned entire new industries, and redefined the entire smartphone category (Nokia and Blackberry being notable casualties that I worked with closely).

But on a personal level, it was the iPod Touch that got me into reading news and posting links over breakfast even before we had the iPhone in Europe, and it was the iPad that carried that habit forward and afforded me a good enough balance of power and flexibility until I was writing (and developing) most of this site on it–even if it took Apple years to acknowledge people wanted keyboards, mice and pens to work.

And thus we come to the iPad Pro, which is almost the modern day equivalent of that iBook (vertically integrated hardware and software, very different from equivalent devices and with a good application ecosystem), but with a key difference–it is fundamentally kneecapped by its operating system and Apple’s reluctance to have a continuous functionality spectrum across its computing platforms.

I expect M2 iPads to come forth in a few weeks (or months), but I don’t expect them to be fundamentally different or groundbreaking from a platform perspective.

Oh, there will be some unique features for sure (possibly annoying people who, like me, just got an M1 Pro), but I am at a loss to see how they might be revolutionary. iPad OS 16 is likely to help, although what I’ve seen of both Stage Manager and the latest betas is still, quite frankly, sub-par.

The iPad Pro deserved proper external display support from the outset, and the affordances made towards a “normal” document-oriented workspace (with the lackluster Files app still dealing poorly with remote filesystems and the various storage providers forcing users to jump through hoops to actually get at other applications’ sandboxed data) are definitely not what I expected things to evolve towards.

Although I am effectively wedded to iOS on my phone (more on that later), I would like to believe iPad OS is going to become something like a “full” operating system (or at least become more functional than, say, a Chromebook, regardless of the application ecosystems).

Only time will tell.

The Watch

I won’t say much about the Apple Watch other than I actually sleep with one and it only leaves my skin for a couple of hours every day while I shower and breakfast.

I wear one for health reasons (sleep and heart monitoring are something I need quantitative data on), convenience (it is a great way to use Siri and HomeKit for home automation) and very selective notifications, and having tried a lot of wearables over the years, I really don’t think any of the other smartwatches stack up even though I am not exactly thrilled with new models.

The reason I mention it here is that it is the one bit of Apple tech that really drives home the point that the future we now live in would have been extremely hard to predict twenty years ago–having more computing power on my wrist than one of the VAX machines that ran my college labs is… fun.

The Cloud/Subscription Economy

Going back to software, the big change that has helped the Mac reach functional parity with other systems has been the switch towards software as a service. The web eroded away most platform differences, and Safari made it possible for Mac users to be first-class citizens in a new world of centralized, metered software.

It is pretty much impossible these days to use anything that is neither accessed via a browser nor tied to some form of cloud service, which companies see as a way to provide more features, sure, but also as a way to justify charging a subscription fee (I’m looking at you, 1Password).

And over these 20 years, those services wrought profound changes in the way we work. Dropbox was the first game changer in that regard since it was (originally) data- and platform-agnostic. Overnight, I stopped needing to ferry a laptop between work and home and could rely on all my files (documents and code) just being there.

It made a mockery of the “edit stuff on a file share over VPN” lifestyle, and let me work from any machine–including my Mac. But it did not lock my data in, or specify arbitrary limits as to how many documents I could have. Neither do OneDrive or SyncThing.

My stance on cloud services is pretty simple: I won’t use anything that won’t allow me to get my data out, and that won’t work across at least Mac, iOS and Windows.

As an example of how arbitrary and closed some of these modern apps tied to cloud services can be, take Fusion 360 or Shapr3D, two apps I would pay for in an instant if their pricing and data management models made sense.

You can export some of your data, but “documents” are not available as discrete streams of bits you can copy around–their native forms live almost entirely on the server, and you are artificially limited in the number of projects you can have (or keep private) at any one time.

This, together with subscription overload, is nickel-and-diming users to an almost unethical degree, and even accounting for how the relationship between workspaces, applications and files (and file systems) has changed over the years (with many kids famously resorting to search due to not really knowing or understanding where their files are stored), it is an approach I will never endorse, and one that has actually pushed me back into Linux and Open Source solutions for my own stuff.

The Raspberry Pi

Twenty years is a very, very long time for a lot of other things to evolve, too, and my penchant for hardware meant I paid a lot of attention to small computing platforms.

That is partly why I started dabbling in ARM devices by hacking the NSLU2 (which, amazingly, actually ran this site at one point), and why I own pretty much every single revision of the Raspberry Pi–it has been the IoT equivalent of the original IBM PC reference design, and it has been as important to me in the past few years as Apple devices.

I tried a few other platforms (like the ODROID and the Banana Pi I wrote about only last week), but the Pi is just overwhelmingly “normal” and well-supported.

(and no, Stable Diffusion has no clue what a Pi actually looks like...)

I have Pis running my home automation setup, inside synthesizers, running sensors of various kinds, and am even revising this on one[2]. They are impossible to buy right now, but I am as excited by the way they have brought the ARM platform into an admittedly niche, but popular, part of computing.

Android

ARM devices and my telecoms work were the reason I invested a lot of time in Android during the past few years, even to the point of doing custom builds for things like the Portuguese Classmate PC and multiple phones I had over the years.

Although I do not care much for it on phones (I can use it, but not live with it), I do like seeing it on all kinds of platforms (from emulation consoles to TV set-top-boxes), and somewhat regret that it is still vastly easier to implement anything I want on Android than on iOS–no developer fees, no awkward limitations as to what you can install on your own device, and, above all, developer documentation that did not leave me guessing (and bear in mind that I knew my way around Mac development since the OS 6 days).

Anything from watch faces to entire digital signage systems was, at most, a couple of hours away from having a usable prototype, and at one time I actually entertained the thought of it becoming a decent alternative to desktop software (although, ironically, one only slightly less kneecapped by its creators than the iPad).

It is certainly flexible enough to do most of what people typically need and more, so it was hardly a surprise for me to see it leading the VR space, which is definitely something I could not have anticipated 20 years ago.

It also bears mentioning that even though I have a couple of Apple TV boxes and love them, I’ve gone back and forth between them and devices like the NVIDIA SHIELD and the Mi Stick so often that I’m pretty sure Apple is losing big outside the phone and tablet space–Android is just everywhere, and gets things done with less hassle (even if it still lacks polish).

I know that Apple focuses on doing a few, highly profitable things extraordinarily well, but there is so much they could have done better…

This Site

Finally, a few words on the site itself: I don’t have any specific plans for it, in much the same way as I didn’t when it started.

Over the past 20 years, my readership (and I thank you profusely for being one if you’ve made it this far) has come and gone–well, mostly gone, really, as more and more people took more and more time to speculate online about Apple rumors and I slowly drifted away from the bazaar.

But it is still very rewarding to get the occasional e-mail regarding something I’ve written, typically about some technical detail I posted or linked to that helped people out.

I have had the usual amount of offers for the domain (especially during the heyday of Mac rumor sites) as well as a few professional conversations where I would have to either take it down or stop posting (you can probably guess at those, but I’m not telling).

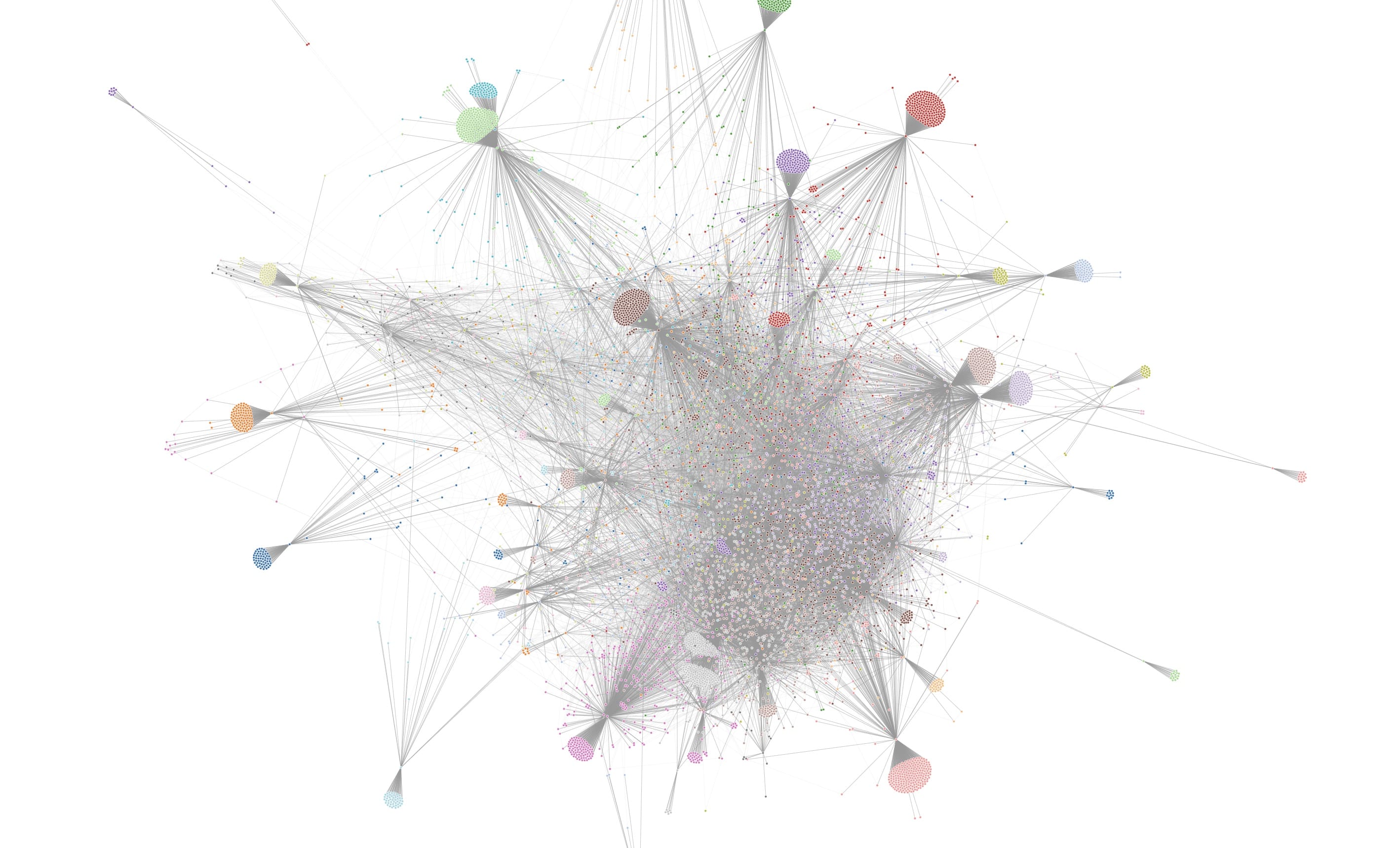

But I don’t have any plans to stop writing (although I have been writing less over the years, and posting less photography and technical articles). The site is still useful to me as a gigantic notebook, peaking at slightly over 9000 (ha!) individual pages:

Most people never notice (since I filter most updates from my RSS feeds), but I keep adding links and resources to some of my dev pages (like the Python, Rust and Go ones), often multiple times a week.

I also don’t have plans for major changes in the way it runs. It’s come a long way from being hosted behind a DSL line from the NSLU2 in my closet or an i386 running PHP in Windows (yes, you read that right, but I had to make creative use of what I could spare). It’s been hosted on multiple machines, colos and various cloud providers and gone through several architecture, CDN and runtime changes, and I will keep using it as a guinea pig as time permits.

Design-wise, I plan to keep it as a no-frills, minimal static site, but I do miss the original design (a variation of Michael Heilemann’s Kubrick), partly because it was so much in tune with the physicality of Macs of that age, and partly because it was such a great fit for my photography hobby and I kept adding header images to it.

So to cap this off, I did a little video of most of those header images (I had some 300 of them), and leave you to enjoy it:

I’m not promising the site will last another 20 years (sometimes it’s hard to believe I might be around that long), but I’d like to see them happen.

Thanks for coming along for the ride.

Oh, and did I mention that Apple still does not have an official Apple store in Portugal?

1. My Switcher’s Guide would occasionally have thousands of page views a day, a kind of popularity that took some time to wane and spurred me to relentlessly optimize the site.

2. This post was mostly written on an iPad, using VSCode inside Blink shell, and with a few quick swipes using vim now and then across various machines–which should give you an idea of how often I switch computing environments.