OK, this was an intense few days, for sure. I ended up going down around a dozen different rabbit holes and staying up until 3AM doing all sorts of debatably fun things, but here are the most notable successes and failures.

Incidentally, this post is a great example of why I think AI is a force multiplier when you have the ability and skills to guide it–without GitHub Copilot, I wouldn’t have even begun to scratch any of the more complex things I put together this week.

And scratching I did, very much so. I went through many of my long-term itches and annoyances regarding a bunch of semi-random things I care about, and tackled the most I could fit into the week for sheer fun.

The Unreasonable Popularity of PhotosExport

To begin with, I decided to modernize and (re)automate one of my usual year-end chores once and for all: Curating and filing the year’s photos off my iPhone and saving a snapshot to my NAS.

This has become a volume enterprise now that we have the new iCloud Shared Photo library, to which I and my wife and kids save photos we want to keep/share.

Even without Apple (finally) blessing us with that feature, exporting all of my photos off iCloud and saving them to my NAS with a coherent, reproducible naming convention, including all the original formats, has been a recurring issue for many years. It goes all the way back to one of the very first HOWTOs on this site, and I keep revisiting it.

Of course I built a set of scripts to help over the years, but guess what, macOS Tahoe and Apple’s utter neglect of automation have made those untenable (there isn’t even a decent Shortcuts action to export photos).

So this year I did it again, but using Swift (in the perhaps naïve belief that Apple won’t break it) and the result was PhotosExport–a typically “me” tool in the sense that it does the absolutely bare minimum I need in the simplest possible way with the fewest dependencies and frills.

It’s not even a proper Mac app, just a highly opinionated CLI tool that you can build and run yourself without any significant tooling except for the Xcode CLI tools.

And since I was a bit annoyed at Apple’s cloud features (including the fact that I can’t get at any of the interesting extra metadata and tagging), I decided to adorn the README with a suitable picture:

I didn’t think anyone else would be interested in it, but somehow it took off and got 160+ GitHub stars and 5 forks in a couple of days, as well as quite a bit of direct feedback–including from people who see it as a way to migrate off iCloud/Apple altogether.

Classic Mac Pedal To The Metal

In parallel, I proceeded to bite off more than I could chew on various other fronts.

For instance (and this is one of the failures), I had a go at building on my drawterm port and trying to get Acme-SAC to work, but I would have to port Inferno and dis to 64-bit and that was a bit too much–but I did take a stab at it.

Somehow, that led me to something that consumed me way into the wee hours last night–trying to improve Basilisk II performance on low-end ARM hardware.

I could have spent these quiet days installing the new LCD display I got for the Maclock or finishing the design for a new case for my SE/30 replica.1 Instead, I decided to see if I could:

- Build a “baremetal” version that leveraged the Pi’s `opengles2` support (which I sort of already had, since my manual builds used the frame buffer directly)

- Add an ARM JIT engine.

And then I thought–heck, why not make it two engines? (cue the “five blades” meme)

After all, I have had to run Basilisk II on fairly low-end 32-bit hardware in the past, and the 32-bit builds are actually faster on 64-bit Raspbian for some things, so I took the partial ARM32 JIT engine from ARAnyM and paired it with the Unicorn Engine. It lacks FPU emulation, but seems to be able to JIT chunks of 68k code OK, if disappointingly slowly.

And… 24 hours later, I have experimental releases of both 32 and 64-bit JIT builds.

Only the 32-bit one actually boots for now and seems snappy, but has some SDL and screen corruption issues I’m trying to figure out (this is one of the most extreme cases, it’s a little better now):

…but I have just spent one of the most fun late nights in years fighting with raw, unfettered low-level C, and even if I have to thank AI for a lot of the guidance and exploratory code, it was totally worth it.

Pro Tip: Worktree support in VS Code is a complete lifesaver. Also, consider building specialized, local MCP servers to help you with common tasks.

The only thing that bugs me is that this took away nearly all of the time budget I had allocated to either finishing the 3D model for a new case or opening the Maclock and starting to figure out how to mount the hardware inside (although I have started collecting references for classic Mac case recreations).

Node-RED Redemption

But Basilisk II wasn’t the only thing I decided to reboot. For a couple of weeks now, I have been quietly poking at a completely rebooted version of the original Node-RED dashboard module, rebuilt from the ground up using Preact and Apache ECharts.

I started doing it almost exclusively because of this Dashboard 2.0 issue, which has been open since 2024, and it sort of ballooned from there.

Truth be told, I’m also doing it because I think that the original dashboard is a much better fit for my needs in general, especially given the way it is deeply integrated into Node-RED.

And I had a bunch of ulterior motives:

- I wanted to do a sizable project in TypeScript, and I might as well do some refactoring work to get started since I can compare it to the original.

- I have always wanted to get rid of the Angular layer and have a single, unified modern CSS file that didn’t require additional tooling to maintain.

- I really need a dashboard that works the way the old one did. And I am 400% positive I am not the only one.

I’ve also always been a fan of the utterly no-frills, very lightweight take Preact has on handling components, so I guess things just clicked the moment I started using bun heavily.

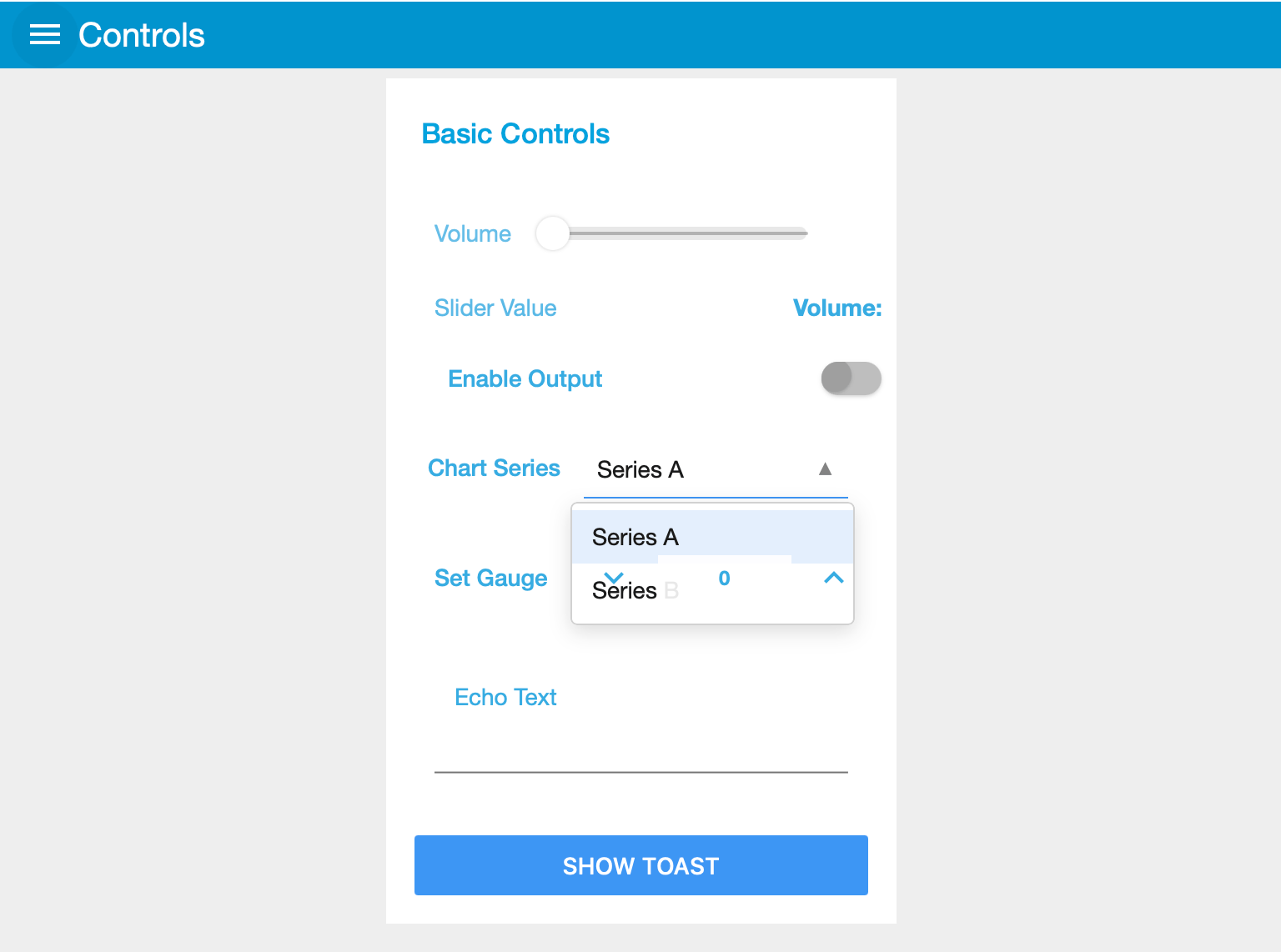

Right now I have most of the components “working”, although there are a few differences and nearly all the charts are buggy (I am still mostly refactoring and generating tests to ensure UX and behavior compatibility), but it is… usable in a way:

Once I start daily driving it I’ll likely do the unthinkable and end up maintaining an npm package.

As a nice bonus, I added a bunch of locales that had never been supported, bringing the total up to 12:

- 🇺🇸 en-US (English)

- 🇩🇪 de (German)

- 🇪🇸 es-es (Spanish)

- 🇫🇷 fr-fr (French)

- 🇮🇹 it-it (Italian)

- 🇯🇵 ja (Japanese)

- 🇵🇹 pt-pt (Portuguese - Portugal)

- 🇧🇷 pt-br (Portuguese - Brazil)

- 🇨🇳 zh-cn (Simplified Chinese)

- 🇹🇼 zh-tw (Traditional Chinese)

- 🇰🇷 ko (Korean)

- 🇷🇺 ru (Russian)

Pro Tip: If you’re doing this kind of localization work, build a tool that does random sampling of your locale strings (I’m doing five sets of three: the English base plus two translations), and any LLM (even the simplest local model) will churn through the mistakes in minutes.
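As a rough illustration of that sampling idea (a minimal sketch, not the actual tool used here), the function below builds review batches that each pair the English base with two randomly chosen translations. The function name, the `locales` dictionary layout, and the `keys_per_set` parameter are all assumptions made for the example.

```python
import random


def sample_locale_strings(locales, base="en-US", sets=5, per_set=2,
                          keys_per_set=10, seed=None):
    """Return `sets` review batches: the base locale plus `per_set` random translations.

    `locales` is assumed to map a locale code to a {string_key: text} dict.
    """
    rng = random.Random(seed)  # a seed makes a sampling run reproducible
    others = sorted(code for code in locales if code != base)
    base_keys = sorted(locales[base])
    batches = []
    for _ in range(sets):
        chosen = [base] + rng.sample(others, per_set)
        keys = rng.sample(base_keys, min(keys_per_set, len(base_keys)))
        batches.append({
            "locales": chosen,
            # A missing translation shows up as None, which is worth flagging too.
            "strings": {
                key: {code: locales[code].get(key) for code in chosen}
                for key in keys
            },
        })
    return batches
```

Each batch can then be serialized (for instance with `json.dumps`) and pasted into a prompt asking the model to flag translations that disagree with the English base.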

Only Fans, Sorta… Not

After almost a year of poking at ways to keep things cooler in my closet, I finally fixed my TerraMaster’s fan controller (or, rather, found the Linux daemon that could deal with its proprietary PWM controller).

I haven’t pushed any of my scripts or notes to GitHub yet, but they’re going to end up on this repo.

Serendipitously, borg’s CPU fan died sometime this week, and I had to do some open heart surgery on it:

Fortunately, I have spares of this kind of thing lying around, but it led me down another rabbit hole, which is taking another stab at fixing one of my long-time outstanding issues–having comprehensive metrics and alarms (i.e., observability) for all of my hardware (and home automation, and app metrics).

InfluxDB and Telegraf

Proxmox has built-in monitoring for CPU, storage, etc., and I have long stuck to a very simple SQLite-based approach for my home automation temperature and power charts.

But I didn’t have a unified view of a lot of things–including baremetal data like CPU temperatures, fan speeds, etc.–and no real alarms other than a ZFS monitoring script that I put together when I repurposed some old HDDs.

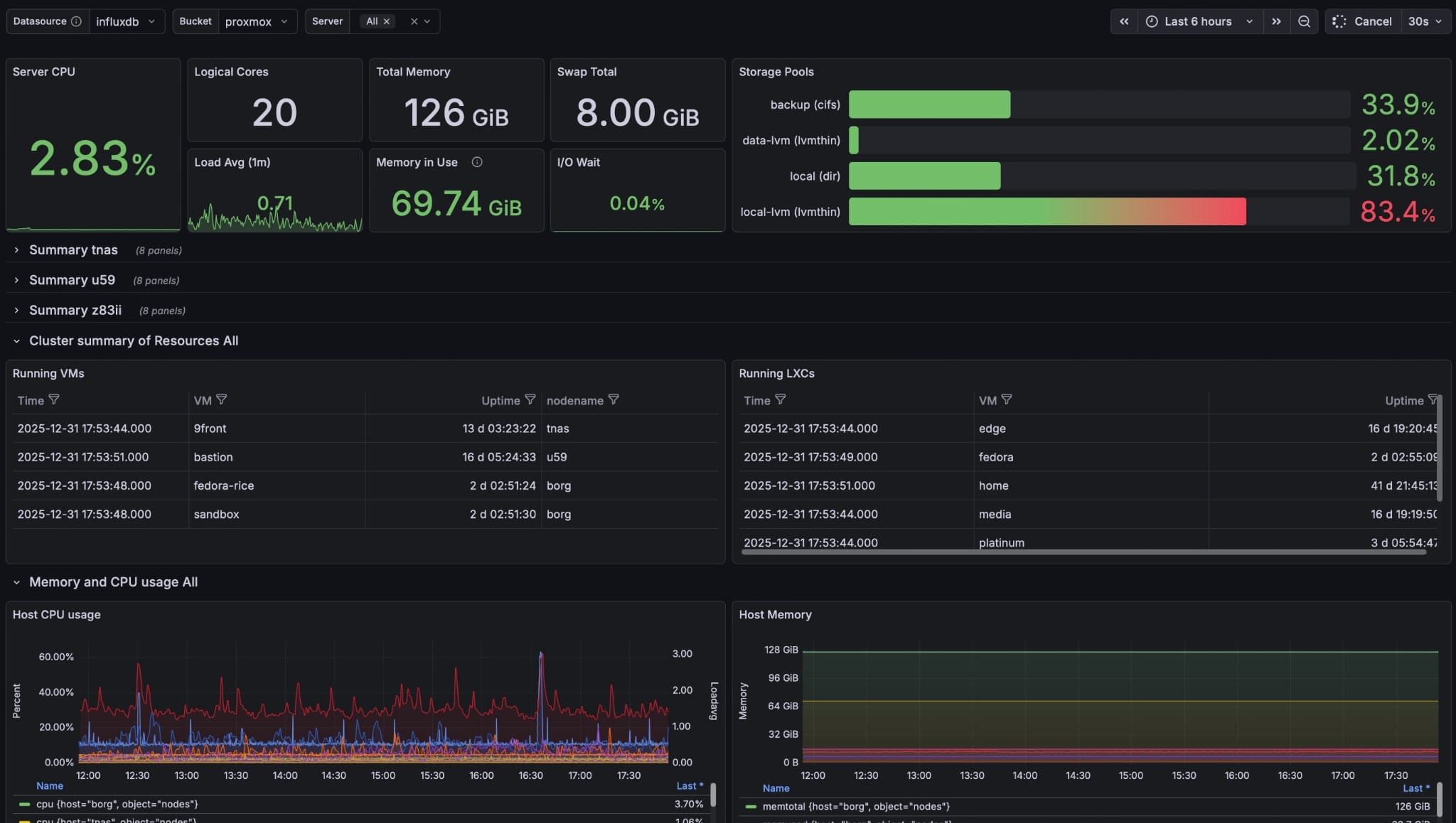

After avoiding it for years I decided to look into InfluxDB, quickly steered away from the “big data style” version 3 rewrite and set up InfluxDB 2.0 on my main NAS.

Pointing Proxmox at it was a very trivial setup from the Datacenter settings–a few clicks and all my nodes, VMs and LXCs were sending metrics to it.

But were they?

Well… not really. The baked-in PVE collector is really picky about settings, and the only way I got it to work was to set up a dedicated proxmox bucket (it refused to send LXC metrics to anything else, which I suspect is a bug).

Pro Tip: Check the Proxmox forums whenever you come across this kind of inconsistency. Sadly, it seems this bug has been around for years and surfaces inconsistently.

Since I absolutely loathe Grafana (it’s overly complicated), I decided to have a go at building dashboards on InfluxDB directly, which… Nope.

So of course I started another project:

I forked steward and am building yet another agentic dashboard creation application that will eventually make it easy for me to create Vega or Giraffe grammars and Flux scripts from just talking to an AI.

Yes, I know this is a trope, but I figured I might as well get on that bandwagon, even if it is too close to what I actually do at work.

This is going to be a slow-burning project, so in the meantime I have already started piping zigbee2mqtt metrics into InfluxDB and I am setting up telegraf on all my physical machines and Docker hosts for both temperature/hardware sensor monitoring and detailed container statistics.
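To make the zigbee2mqtt part more concrete, here is a minimal sketch of turning one of its JSON state messages into an InfluxDB line protocol record. It is not the pipeline actually in use here: the `zigbee` measurement name, the `device` tag, and the function names are assumptions, and the sketch only formats the line without writing it anywhere.

```python
import json


def _escape(text, chars):
    # Line protocol escapes special characters with a backslash.
    for ch in chars:
        text = text.replace(ch, "\\" + ch)
    return text


def _format_field(value):
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "true" if value else "false"
    if isinstance(value, (int, float)):
        # Writing every number as a float avoids field type conflicts when a
        # sensor reports 21 in one message and 21.5 in the next.
        return repr(float(value))
    escaped = value.replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def to_line_protocol(topic, payload, measurement="zigbee", timestamp_ns=None):
    """Convert a `zigbee2mqtt/<friendly name>` message to one line, or None."""
    data = json.loads(payload)
    if not isinstance(data, dict):
        return None
    device = topic.split("/", 1)[-1]
    # Keep only scalar values; nested objects and nulls are skipped.
    fields = {k: v for k, v in data.items()
              if isinstance(v, (bool, int, float, str))}
    if not fields:
        return None
    field_set = ",".join(
        f"{_escape(key, ',= ')}={_format_field(value)}"
        for key, value in sorted(fields.items())
    )
    line = f"{_escape(measurement, ', ')},device={_escape(device, ',= ')} {field_set}"
    if timestamp_ns is not None:
        line += f" {timestamp_ns}"
    return line
```

Coercing numbers to floats is a deliberate simplification: it loses the integer type, but keeps a field from flipping between integer and float across messages.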

But first, I’m automating the heck out of the roll-out with ground-init to ensure it’s repeatable. Watch that for templates over the next few weeks.

And yes, I eventually caved in and installed Grafana to make do. But I intend to “experience” it again at leisure to see how much simpler I can make my own dashboarding experience. I hate it, but at least it works for now:

The only thing I’m missing now is proper OpenTelemetry-like application performance metrics. Most of my cloud stuff uses Azure Application Insights for metrics, tracing, and exception logging, and even if I might be able to shoehorn part of it into InfluxDB, any suggestions on something I can do about it (and yes, I have Signoz on my shortlist) are welcome.

Update, the day after: Boy, a new year sure changes your perspective. Anyway, I decided to ditch InfluxDB given the artificial limitations in 3.0 and their deprecation of Flux, and to use Graphite instead, since I just want simple time-series storage and graphing without all the bloat, and a single container gives me everything I need for initial exploration. More on that later as I fine-tune my `telegraf` setups and figure out alerting, which is the one missing piece for me now.
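For a sense of how simple that storage side can be, Graphite's Carbon daemon accepts a plaintext protocol of `path value timestamp` lines, by default on TCP port 2003. The sketch below formats and sends such lines for ad-hoc testing; it is an illustration rather than a replacement for the telegraf setup mentioned above, and the metric prefix, metric names, and host are hypothetical.

```python
import socket
import time


def graphite_lines(prefix, metrics, timestamp=None):
    """Format a {name: value} dict as Graphite plaintext protocol lines."""
    ts = int(time.time() if timestamp is None else timestamp)
    return "".join(
        f"{prefix}.{name} {value} {ts}\n"
        for name, value in sorted(metrics.items())
    )


def send_to_graphite(payload, host="localhost", port=2003, timeout=5.0):
    """Send preformatted lines to a Carbon plaintext listener over TCP."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(payload.encode("ascii"))
```

Something like `send_to_graphite(graphite_lines("home.borg", {"cpu_temp": 48.5}))` would then push a single data point, assuming a Carbon listener is reachable on the default port.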

We Have Hologram Microcosm At Home

This one is completely off the wall, but worth mentioning since it’s been on my back-burner for years and I have finally gotten around to it.

In short, the Hologram Electronics Microcosm is a very fancy granular effects pedal that took the synth world by storm a few years ago, and that I have always wanted to play with.

But my music hobby only really happens when I’m happy at work (and that hasn’t happened often of late) plus there is no way I could ever justify spending that much on one, so I have been tinkering with the idea of replicating most of its features in software somehow.

And then I remembered I have a Norns Shield I built a few years ago that can do most of it on paper, and I had a wild idea: I got the Microcosm PDF manuals, rendered them in Markdown, extracted all the feature descriptions and built a very comprehensive, LLM-ready SPEC.md with absolutely everything I could glean from the documentation.

The result of around 30 minutes of feeding that to GitHub Copilot was nanocosm:

…and, even more mind-blowingly, the first effect just worked.

I plugged in my Xsynth, piped the audio through, and it did some of the granular synthesis/looping/reverb I expected. I was hooked, and ended up spending most of last Friday fiddling with it.

Now for the reality check: I have no idea if it actually sounds like the Microcosm. After two days “implementing” all the effects I am constantly coming up against Supercollider issues and UI glitches, and the Norns has a pitiful amount of physical controls when compared to the Microcosm.

Still, I now have an effects toy that is a lot of fun to tinker with–it’s a great Christmas present for myself.

The icing on the cake is that I also ended up building an MCP server for it that is sophisticated enough for me to “show” Copilot what is in the UI and what sliders I’m tweaking so we can refine the Lua part:

I still need to sort out the Supercollider bugs (right now I’m not releasing all of the filters in some UI interactions) and figure out if this is actually releasable. It is, after all, a clean room re-implementation of the Microcosm, and as any synth nerd will tell you, Behringer is still very much in business… but I need to think about it, and if I reach a positive conclusion I will put it up on GitHub as well.

Other Stuff

Finally, there were a few other minor successes/failures:

- I finally got `wisp` to a usable state (for me), although I have completely failed at removing its recursive dependency on itself (like many LISPs, the compiler was rebuilt with itself, which means it’s a pain to deal with)

- I rebuilt my friend Carlos’ RetroArch shaders into a format I can use on my Apple TV

- I did many minor tweaks to `kata`, my feed summarizer and `guerite`.

These had nearly daily fixes, since real life keeps coming up with corner cases–but they were all simple enough to be fixed using toad and the free OpenCode models using nothing but my iPad and a terminal window, so that was great.

But even though I’m quite happy with all of these hacks and this was arguably one of my best grown-up holiday weeks ever, I think I need to go back to dealing with hardware again.

I have been meaning to build my own ZigBee devices, but I also have new single-board computers and mini-PCs to test, so I think I’ll try to switch gears to those for the remaining few days before I go back to work (which is effectively Friday, but I’m looking forward to the weekend).

Thank You

This is likely going to be the last post of the year, so I would very much like to thank:

- Everyone who’s visited or supported this site (and hence a good deal of my hobbies)

- All the vendors who’ve graciously provided review samples throughout the year (and hence not just provided unique opportunities to gauge the state of various pieces of hardware, but also indirectly helped my private consulting engagements, since all that testing helps me keep my technical skills sharp)

But, most importantly, if you’ve read this far, I wish everyone a very Happy New Year.

May 2026 be a much better year for everyone all around (regardless of whether my predictions pan out or not).

1. I’ve been slowly poking at this since May, after all, and the inflection point was, I think, when I decided to set up my own automated builds of Basilisk II.