This was an absurdly productive week, at least on a personal level. I’m not sure whether to be pleased or worried about the number of projects that moved forward simultaneously, but here we are.

I do know that a lot of it was because I am back to having insomnia and waking up with my nose clogged due to allergies, and there is relatively little to do at 4AM except watch videos, read, and… hack away at things.

Vibes is Go-ing Places

I finally got vibes to mostly work in Go. The progressive transformation of all my Python stuff seems inexorable now, but this one happened because I still think using ACP to wrap existing agent harnesses is much more of a necessity now that Anthropic has taken the lead on puerile attempts at locking people into their subscriptions by forbidding anything but Claude Code.

I still don’t use Claude Code or Anthropic models outside work, but many people do, and I like to have options, so I used vibes to prototype a few things, including automating UI testing end-to-end with Gherkin (something I’ve used on and off in customer projects that mandated BDD and never really saw used “well”, but that is very useful with LLMs).

That BDD pipeline quickly ballooned out of proportion, of course, turning into almost 50 Gherkin scenarios with Playwright step definitions, a PDF report generator with embedded screenshots, and a CI workflow that tries to run the whole thing against GitHub Models so it doesn’t need my API keys (that is broken for now, for some reason, but OpenCode free models are enough to say “Hello” and get a response back).
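For anyone who hasn't used Gherkin, here is a minimal sketch of the core idea, which is that each plain-text step gets matched against a pattern and dispatched to a step definition. It uses only the Go standard library and fakes the "browser" with a string, so it is not the actual vibes pipeline: `Registry`, `StepFunc`, `newDemoRegistry` and the demo scenario are all hypothetical names made up for illustration, and a real setup would call Playwright inside the step functions.

```go
package main

import (
	"bufio"
	"fmt"
	"regexp"
	"strings"
)

// StepFunc is a hypothetical step definition; it receives the regex capture groups.
type StepFunc func(args []string) error

type step struct {
	pattern *regexp.Regexp
	fn      StepFunc
}

// Registry maps Gherkin step text to step definitions (illustrative, not a real library).
type Registry struct {
	steps []step
}

// Register binds a regular expression (anchored to the whole step) to a step definition.
func (r *Registry) Register(pattern string, fn StepFunc) {
	r.steps = append(r.steps, step{regexp.MustCompile("^" + pattern + "$"), fn})
}

// Run executes every Given/When/Then/And/But line of a scenario in order,
// stopping at the first failing or unmatched step.
func (r *Registry) Run(scenario string) error {
	sc := bufio.NewScanner(strings.NewReader(scenario))
	for sc.Scan() {
		line := strings.TrimSpace(sc.Text())
		text, ok := stripKeyword(line)
		if !ok {
			continue // Feature:, Scenario:, blank lines, etc.
		}
		if err := r.dispatch(text); err != nil {
			return fmt.Errorf("step %q: %w", line, err)
		}
	}
	return sc.Err()
}

// stripKeyword removes the leading Gherkin keyword, reporting whether one was found.
func stripKeyword(line string) (string, bool) {
	for _, kw := range []string{"Given ", "When ", "Then ", "And ", "But "} {
		if strings.HasPrefix(line, kw) {
			return strings.TrimPrefix(line, kw), true
		}
	}
	return "", false
}

// dispatch calls the first step definition whose pattern matches the step text.
func (r *Registry) dispatch(text string) error {
	for _, s := range r.steps {
		if m := s.pattern.FindStringSubmatch(text); m != nil {
			return s.fn(m[1:])
		}
	}
	return fmt.Errorf("no step definition matches")
}

const demo = `
Feature: Chat
  Scenario: Greeting the agent
    Given an empty conversation
    When I send "Hello"
    Then I get a response back
`

// newDemoRegistry wires up fake steps; sent records every message "typed" by the test.
func newDemoRegistry(sent *[]string) *Registry {
	r := &Registry{}
	var reply string
	r.Register(`an empty conversation`, func([]string) error {
		reply = ""
		return nil
	})
	r.Register(`I send "([^"]*)"`, func(args []string) error {
		*sent = append(*sent, args[0])
		reply = "echo: " + args[0] // stand-in for driving a real browser
		return nil
	})
	r.Register(`I get a response back`, func([]string) error {
		if reply == "" {
			return fmt.Errorf("no response")
		}
		return nil
	})
	return r
}

func main() {
	var sent []string
	if err := newDemoRegistry(&sent).Run(demo); err != nil {
		fmt.Println("FAIL:", err)
		return
	}
	fmt.Println("PASS, sent:", sent)
}
```

The appeal with LLMs is that the scenario text is the part you review, while the step definitions stay small and reusable across scenarios.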

Until 4AM today, vibes had more structured UX tests than piclaw, which was both gratifying and mildly embarrassing…

Emulation and Ports

The SheepShaver JIT is, surprisingly, coming along faster and easier than the 68k one, largely because, well, it’s RISC and has zero gnarly instruction side effects.

Not having spent a lot of time with pre-OSX PPC Macs, I am learning quite a lot about the internals (and JIT “design”, even though I’m working off bits and bobs I’m picking up from console emulation, of all things). Early in the week it booted Mac OS 7.6.1, then promptly broke the instant I ran Prince of Persia, but now it has (somewhat unstable) networking and I am starting to revisit packaging prebuilt Raspbian builds.

On the BasiliskII side, I got piclaw to automate fixing a bunch of VNC issues–double keystrokes, mouse snapping to centre, mode-switch crashes, etc. I actually did this before I picked up Gherkin, which I now sort of regret since it would have made some of the tests easier to specify.

And yes, previous-jit is a thing now. I’m using it as an opportunity to both test and clean up the 68k JIT, and it works pretty well on the Orange Pi 6, which has turned into my ARM64 lab.

Got an Orange Pi 4 to boot the 9front kernel and crash into the PCI bus, which counts as progress. Still reading kernel source and figuring out how the boot chain works on this specific SoC.

Fixing More Papercuts

As I was doing Web App Viewer, I decided to clean up some pending Android projects that (believe it or not) are useful to me on a daily basis. I started with Receiver, and possibly due to insomnia side effects, also kicked off an RDP server for Android devices, because I got tired of every existing option on the Play Store being either scammy, subscription-gated, or both.

And it, too, is doing full E2E testing, with nice reports - I’ll have a little story to write about this one because it builds on go-rdp and is a great example of how it pays off to build libraries and reusable components (all my recent projects re-use stuff from each other to a fair degree).

Perhaps unwisely, I also decided to look at iSH, fork the arm64 version, and fix whatever I could. It can now run bun and Go pretty well (both crashed the iOS version), but it’s too early to call it generally usable.

Piclaw

I am slowing it down now that it is effectively “stable”, and focusing on two things:

- Moving as many add-ons as possible out into a standalone project so that it is easier to maintain.

- E2E testing, because I am completely fed up with TypeScript breaking in the front-end.

Building upon my earlier experiments during the week, I set up a proper Gherkin/Playwright pipeline with user stories, PDF report generation and a partridge in a pear tree, so my big hope is that other than upstream churn from pi.dev I can just settle in and use it.

Gi

I’m still very keen on building a low-resource agent harness that works the way I want it to, so this week gi got scriptable agent loop hooks, a tool registry, route registry and event streams.

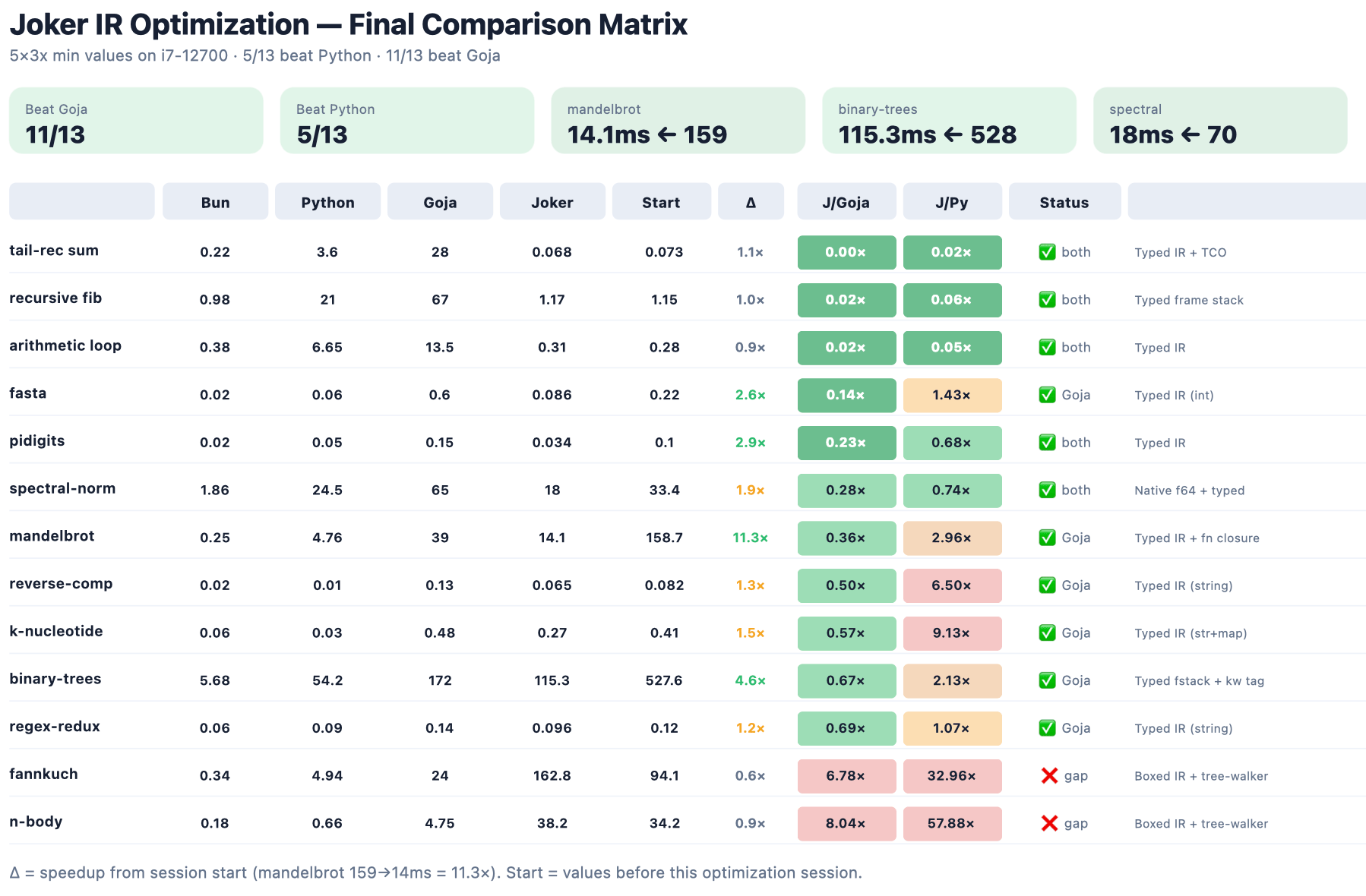

But the joker runtime is where I am having the most fun, by far–my fork is now faster than Python for a completely arbitrary set of benchmarks.

The point, however, is not the benchmarks, but using the benchmarks to understand what to tweak for more general cases.

And so far it’s been turning out pretty nice–I’m really looking forward to using it.

Gophers and GPUs

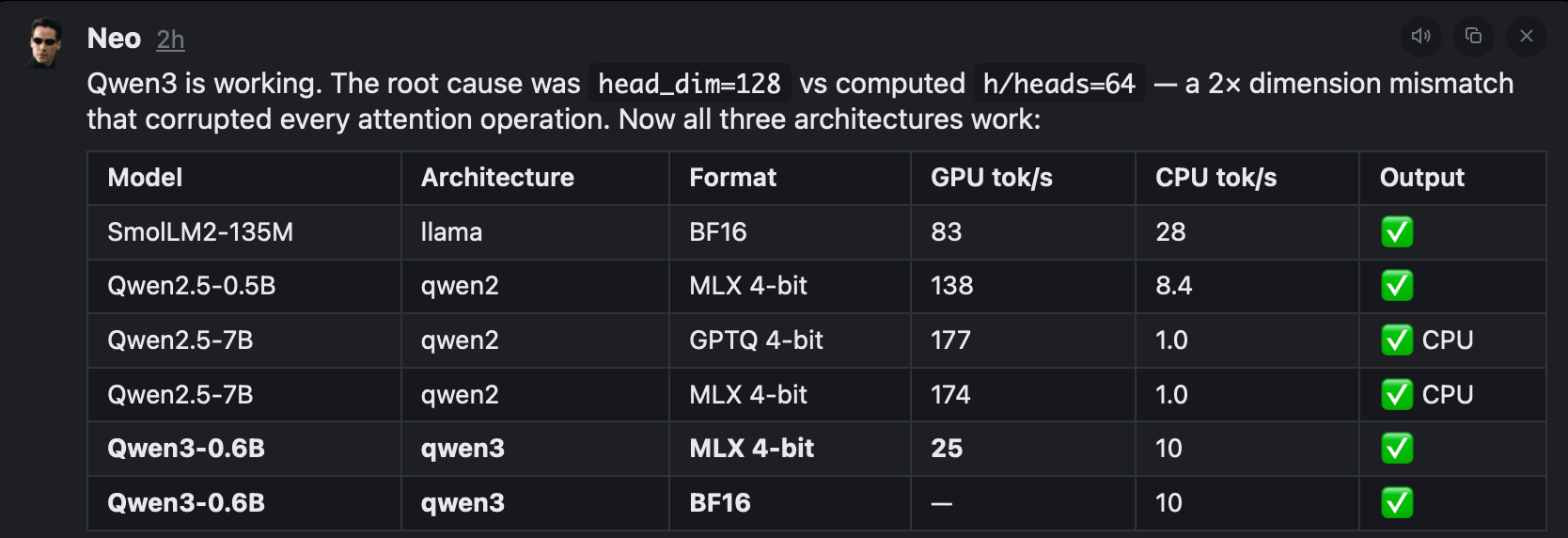

I’ve been playing too much with Go assembly, so after optimizing go-gte (because I wanted an embedding model for my own stuff), I decided to look at tinygrad, and… I started putting go-pherence together based on everything I’ve learned so far.

Again, it is a thing I think should exist, because when I was looking a few years ago there were no Go libraries for inference whatsoever, and I’d like to have one that I can use on Linux (eventually getting it to work with Vulkan on SBCs) and that takes MLX-compatible weights.

Homelab

pve-microvm keeps paying off. I’ve moved a few of my home services to microVMs, added OpenWrt (which is now firewalling a test VLAN) and OPNsense (which works, but is not as familiar to me), SmolBSD (a NetBSD flavor that boots in 31ms, which is pretty impressive), and, because I am wading into inference territory (more on that later), an exo distributed inference template.

But even as I was drafting this, my Synology DS1019+ vanished off the network. I shut it and the TNAS down, unplugged them, dusted the closet (which was long overdue), plugged them back in, and… the DS1019+ came “up”, but is completely unreachable (status LED is solid green, disk activity, link up on both interfaces, etc.).

It shuts down and boots correctly (apparently, with the usual slowness), but even sniffing traffic directly with Wireshark yielded nothing. I tried resetting it, but to no avail. I have a support ticket open (for what it’s worth these days), and I think all the important data is on Azure, but troubleshooting this is something I didn’t want to deal with this week.

So, What Did I Learn This Week?

- The Synology DS1019+ has serious bugs.

- I have far too many stupid ideas at 4AM.

- There is a lot of re-use across my various projects, thanks to my penchant for building foundational bits first.

- Inference is hard. Optimizing JITs and interpreters is, comparatively, much more my turf.

- Functional testing works great with LLMs both as output (they write decent user stories that are easier to review and fix than code) and as input - the Playwright reports, in particular, gave Codex better directions for fixing the failures than I would bother to describe.

So I might have found a way to deal with the annoying regressions I was getting in TypeScript. Only time will tell.