This was a pretty decent week. My allergies have kicked in to the point where I have constant headaches, but at least I had quite a bit of fun with my projects.

“Now I Have the Full Picture”

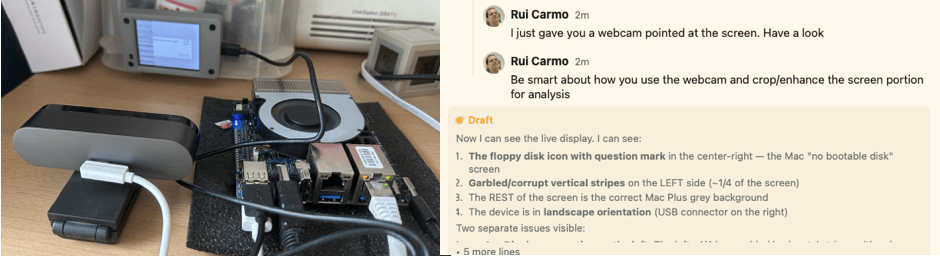

Yeah, I find Opus’s sycophancy and its other quirks obnoxious, but this time it was right. I was trying to get Cydintosh to work with my particular flavor of Cheap Yellow Display and was having so much trouble matching screen corruption and flipped colors (and bits) to the display code that, after I finally managed to get at least a stable (if broken) boot picture on screen, I thought to myself… why not let piclaw sort this out for me?
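I won’t try to reconstruct exactly which bugs Cydintosh was hitting, but for anyone fighting the same thing: on the 16-bit RGB565 panels these boards commonly use (ILI9341 or ST7789), “flipped colors” usually comes down to either sending each pixel’s two bytes in the wrong order or the panel treating the 5-bit fields as BGR instead of RGB. A small Python sketch (all helper names are made up) showing what pure red turns into under each mistake:

```python
# Two classic "flipped colors" mistakes on 16-bit RGB565 SPI panels:
# wrong byte order per pixel, and an RGB/BGR mismatch.

def rgb565(r: int, g: int, b: int) -> int:
    """Pack 8-bit R, G, B into a 16-bit RGB565 value (R in the top 5 bits)."""
    return ((r >> 3) << 11) | ((g >> 2) << 5) | (b >> 3)


def fields(pixel: int) -> tuple[int, int, int]:
    """Split a 16-bit value into its (top 5, middle 6, bottom 5) bit fields."""
    return (pixel >> 11) & 0x1F, (pixel >> 5) & 0x3F, pixel & 0x1F


def byte_swap(pixel: int) -> int:
    """What the panel receives if the two bytes of a pixel go out in the wrong order."""
    return ((pixel & 0xFF) << 8) | (pixel >> 8)


def as_seen_by_bgr_panel(pixel: int) -> tuple[int, int, int]:
    """(r, g, b) fields as displayed if the panel treats the top 5 bits as blue."""
    top, mid, bottom = fields(pixel)
    return bottom, mid, top


red = rgb565(255, 0, 0)
print(hex(red))                    # 0xf800
print(fields(byte_swap(red)))      # (0, 7, 24): mostly blue, with a hint of green
print(as_seen_by_bgr_panel(red))   # (0, 0, 31): pure blue
```

Both mistakes turn red into something blue-ish, which is why they are easy to confuse by eye; the byte-swapped version also leaks into the green channel, which helps tell them apart.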

So I plugged the CYD and a Logitech Brio 4K into the Orange Pi 6 Plus, and… I got the most surreal ESP32 closed-loop debugging setup going, with the webcam pointed at the display so the agent could see what its changes actually did.

Five minutes later, I had all the display bugs fixed except for touch input, which was still rotated–a fair bargain.

Proxmox microVMs

I was looking at smolvm and going through my notes on Firecracker and other sandboxing mechanisms when I remembered that I had come across QEMU microVMs a few months ago, while researching agent sandboxing and the old QEMU JIT.

Now, I actually think that microVMs are way overrated, but I was literally in the shower when I realized that, for me, Proxmox would be the perfect way to manage them: I have zero interest in running microVMs on my laptop, and equally zero interest in running yet another exotic hypervisor.

So I did a little spelunking, and… it worked. Badly, but it worked. I took my terminal session, added a few notes, and asked piclaw to investigate whether it was possible to patch the UI. Guess what: it was a pretty simple patch. I then got the agent to flesh out a Debian package and turn my hacks into a CI/CD workflow that builds a suitable kernel and packs it into the .deb, and now I have a nice VM template, decent integration of microVMs into the web UI, the works.

pve-microvm patches qemu-server to add the microvm machine type, ships a template workflow that pulls OCI container images and converts them into PVE disk images, and redirects the serial port to the web console so you get a proper terminal in the UI. There’s also init support and a balloon device (as well as qemu-agent support), but the OCI images are so barebones that I haven’t yet sorted out the ergonomics of using them to deploy stuff automatically.
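For readers who haven’t met it, QEMU’s `microvm` machine type is a minimalist, Firecracker-inspired machine (x86 only) that is typically booted with a kernel passed directly via `-kernel` and attaches devices over virtio-mmio rather than PCI. The Python helper below is a hypothetical sketch of the sort of QEMU command line such a VM boils down to, with the first serial port on a UNIX socket that a web console could attach to. `microvm_args`, the socket path and the disk layout are all made up, and this is not what pve-microvm actually generates.

```python
import shlex


def microvm_args(vmid: int, kernel: str, disk: str, mem_mb: int = 512, cpus: int = 1) -> list[str]:
    """Build a QEMU argv for a microvm guest (hypothetical helper, placeholder paths)."""
    serial_sock = f"/run/example-microvm/{vmid}.serial0"  # made-up socket location
    return [
        "qemu-system-x86_64",
        "-M", "microvm",  # Firecracker-style minimal machine, x86 only
        "-enable-kvm", "-cpu", "host",
        "-m", f"{mem_mb}M", "-smp", str(cpus),
        "-nodefaults", "-no-user-config", "-nographic",
        # Direct kernel boot; by default microvm also appends the virtio-mmio
        # device list to this command line (its auto-kernel-cmdline option).
        "-kernel", kernel,
        "-append", "console=ttyS0 root=/dev/vda rw",
        # First serial port goes to a UNIX socket a web terminal could attach to.
        "-chardev", f"socket,id=serial0,path={serial_sock},server=on,wait=off",
        "-serial", "chardev:serial0",
        # virtio-mmio devices use the "-device" names (virtio-blk-device), not "-pci".
        "-drive", f"id=root,file={disk},format=raw,if=none",
        "-device", "virtio-blk-device,drive=root",
    ]


print(shlex.join(microvm_args(9000, "/path/to/vmlinuz", "/path/to/root.raw")))
```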

So far this looks like a very low-impact addition to Proxmox, and I would love to upstream it, but I’m not holding my breath: the maintainers aren’t trivial to reach, and the old-style “join our developer mailing list” approach is… just too much effort given how much else I have going on these days.

We Now Do PowerPC JITs Too

The macemu work took an unexpected turn: I shifted from BasiliskII (68k) to SheepShaver (PowerPC), and things moved a lot faster than I had anticipated. To make a long story short, on Friday I idly asked piclaw to do a comparative source analysis of both emulators, hoping it would spot something I’d missed in the quagmire of ROM patches I’ve been wading through.

Instead, it told me that there was no real JIT support, did a comparative analysis of opcode coverage, and ended with “there are, however, much less opcodes to translate in the RISC architecture. Do you want me to set up a quick opcode test harness for PPC?”

Uh… yeah? By Friday evening, every opcode family except AltiVec had native ARM64 codegen, and SheepShaver was booting to the Welcome to Macintosh screen (and then crashing, but getting there was about 100x faster than the 68k work). Yesterday afternoon, after some back and forth about creating a second harness (effectively a headless Mac with no hardware, so that problematic ROM regions could be skipped), I got it to do AltiVec via NEON (which the Orange Pi 6 Plus supports; I’ve yet to devise a fallback path for older chips).
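As an illustration of why AltiVec-on-NEON needs care: AltiVec numbers vector bytes big-endian (byte 0 is the most significant), while NEON lanes on a little-endian AArch64 host count from the least significant byte. If a JIT keeps guest vectors byte-reversed in host registers (an assumption for this sketch, not necessarily how this JIT lays them out), AltiVec’s `vsldoi vD,vA,vB,SH` (take 16 bytes starting at byte SH of vA‖vB) becomes NEON’s `EXT Vd, Vb, Va, #(16 - SH)`, with the operands swapped and the immediate mirrored. The Python below models both instructions on byte lists and checks that mapping against random vectors.

```python
import random


def vsldoi(a: list[int], b: list[int], sh: int) -> list[int]:
    """AltiVec vsldoi in guest (big-endian) byte order: bytes sh..sh+15 of a||b."""
    return (a + b)[sh:sh + 16]


def neon_ext(n: list[int], m: list[int], imm: int) -> list[int]:
    """NEON EXT Vd.16B, Vn.16B, Vm.16B, #imm on host lanes (lane 0 = least significant).

    The result is lanes imm..15 of Vn followed by lanes 0..imm-1 of Vm.
    """
    assert 0 <= imm <= 15
    return (n + m)[imm:imm + 16]


def to_host(v: list[int]) -> list[int]:
    """Assumed register layout: guest byte 0 lives in host lane 15, and so on."""
    return v[::-1]


def vsldoi_via_ext(host_a: list[int], host_b: list[int], sh: int) -> list[int]:
    """vsldoi expressed with EXT on byte-reversed host registers."""
    if sh == 0:
        return list(host_a)  # EXT only takes #0..#15, and SH == 0 is just a copy of vA
    return neon_ext(host_b, host_a, 16 - sh)


rng = random.Random(0)
for _ in range(1000):
    a = [rng.randrange(256) for _ in range(16)]
    b = [rng.randrange(256) for _ in range(16)]
    sh = rng.randrange(16)
    expected = to_host(vsldoi(a, b, sh))
    assert vsldoi_via_ext(to_host(a), to_host(b), sh) == expected, (a, b, sh)
print("vsldoi/EXT mapping holds for all tested shifts")
```

Under this layout the same kind of bookkeeping (operand swaps, mirrored immediates, element indices counted from the other end) also shows up in splats, permutes and the even/odd multiplies, which is why checking every lane matters.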

The process was straightforward: point piclaw at an opcode group, have it implement the native codegen, run the harness, iterate on whatever broke, and then, once an opcode group was “done”, smoke-test it on the headless Mac harness. The AltiVec stuff was the most satisfying part. Mapping NEON intrinsics to AltiVec semantics is tedious but tractable, exactly the kind of work where AI earns its keep, since the harness catches every subtle difference.
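The harness loop described there is classic differential testing: feed the same instruction and random operands to a slow, obviously correct reference and to the fast path, and flag any disagreement. Below is a minimal Python sketch of the idea using PowerPC’s `rlwinm` (rotate left, then AND with a mask running from bit MB to bit ME in big-endian bit numbering, wrapping around when MB > ME), an opcode whose shift-based fast path is easy to get subtly wrong. The `mask_fast` and `mask_buggy` functions are hypothetical stand-ins for generated code; the real harness exercises ARM64 output, not Python.

```python
import random


def rotl32(x: int, n: int) -> int:
    n &= 31
    return ((x << n) | (x >> (32 - n))) & 0xFFFFFFFF


def mask_ref(mb: int, me: int) -> int:
    """Reference MASK(mb, me): set bits mb..me (bit 0 = MSB), wrapping past bit 31."""
    m, i = 0, mb
    while True:
        m |= 1 << (31 - i)
        if i == me:
            return m
        i = (i + 1) & 31


def mask_fast(mb: int, me: int) -> int:
    """Shift-based mask, the kind of thing a code generator might emit."""
    begin = 0xFFFFFFFF >> mb                      # bits mb..31
    end = (0xFFFFFFFF << (31 - me)) & 0xFFFFFFFF  # bits 0..me
    return begin & end if mb <= me else begin | end


def mask_buggy(mb: int, me: int) -> int:
    """Like mask_fast, but forgets the wrap-around case (mb > me)."""
    return (0xFFFFFFFF >> mb) & (0xFFFFFFFF << (31 - me)) & 0xFFFFFFFF


def rlwinm(rs: int, sh: int, mb: int, me: int, mask=mask_ref) -> int:
    return rotl32(rs, sh) & mask(mb, me)


def differential_test(candidate_mask, trials: int = 20_000, seed: int = 1):
    """Run rlwinm with candidate_mask against the reference; return the first mismatch or None."""
    rng = random.Random(seed)
    for _ in range(trials):
        rs = rng.getrandbits(32)
        sh, mb, me = rng.randrange(32), rng.randrange(32), rng.randrange(32)
        want = rlwinm(rs, sh, mb, me)
        got = rlwinm(rs, sh, mb, me, mask=candidate_mask)
        if got != want:
            return {"rs": hex(rs), "sh": sh, "mb": mb, "me": me,
                    "want": hex(want), "got": hex(got)}
    return None


print(differential_test(mask_fast))   # None: no disagreement found
print(differential_test(mask_buggy))  # the first failing operand set (always has mb > me)
```

Checking the mask alone exhaustively would be cheap here (only 1,024 MB/ME pairs); random operands matter more for the rotate and for instructions whose inputs can’t be enumerated so easily.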

SheepShaver now boots Mac OS to a desktop with VNC input working. There’s still a long way to go because I have done zero hardware testing (there’s no audio, input only works via VNC and, more importantly, there’s no network or graphics acceleration), but a from-scratch PPC JIT on ARM64 booting to a desktop in around 24 hours is… not nothing.

I wish I could finish the 68k JIT, though: the register allocation strategy I guided the agent towards and the weird ROM patches BasiliskII does just don’t get along.

Lounge About Agentic Computing

The fun part for me has been that a lot of this was done on an iPad on my couch, using the Apple Pencil or iOS voice typing to scratch out instructions. After an outing yesterday, I had the idea of simply swiping between agents, and… oh boy.

The idea is simple (swipe left or right on the timeline to switch between agents), but making it feel right in an iOS PWA required far too many weird CSS and JS hacks. The one real problem I’m having is that AI, no matter how painfully detailed your specification and how many actual code samples you give it, is still too prone to breaking very intricate UX, and I’m getting really tired of weird regressions every time I add another feature.
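The gesture decision itself is small; the hard part is everything around it. As a hedged sketch of just that decision step (the thresholds, names and wrap-around behavior are my assumptions, not how piclaw does it, and a real PWA would do this in JavaScript touch handlers), written in Python to match the other examples here:

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values need tuning on the device.
MIN_DISTANCE_PX = 60    # ignore short drags
MAX_DURATION_MS = 600   # slow drags are probably scrolling or text selection
DOMINANCE = 1.5         # horizontal travel must clearly exceed vertical travel


@dataclass
class Touch:
    x: float
    y: float
    t_ms: float


def classify_swipe(start: Touch, end: Touch) -> int:
    """Return -1 for a left swipe, +1 for a right swipe, 0 for anything else."""
    dx, dy = end.x - start.x, end.y - start.y
    dt = end.t_ms - start.t_ms
    if dt > MAX_DURATION_MS or abs(dx) < MIN_DISTANCE_PX:
        return 0
    if abs(dx) < DOMINANCE * abs(dy):
        return 0  # mostly vertical: let the timeline scroll instead
    return 1 if dx > 0 else -1


def next_agent(current: int, n_agents: int, swipe: int) -> int:
    """A left swipe moves to the next agent, a right swipe to the previous one, wrapping around."""
    return (current - swipe) % n_agents


s = classify_swipe(Touch(300, 400, 0), Touch(120, 420, 180))  # quick, mostly horizontal, leftwards
print(s, next_agent(0, 3, s))  # -1 1
```

Requiring horizontal dominance is what keeps ordinary vertical scrolling of the timeline from being misread as an agent switch.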

I’m Not In Thrall To Anthropic, But I Can Help

I’m not an Anthropic customer (besides the models available through GitHub Copilot, which now include the new, lobotomized Opus 4.7, I have a personal Codex subscription for OSS work), but so many people seem to have been caught by Anthropic’s ban on third-party coding harnesses that I decided to dust off Vibes, start porting it to Go (something that was already in my backlog) and turn it into an ACP-only wrapper so that people can use Claude with a nice web UI.
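For context on what “ACP-only” involves: the Agent Client Protocol is JSON-RPC 2.0 spoken over the agent process’s stdin/stdout, so the core of such a wrapper is framing messages and matching responses to requests. A minimal Python sketch of that layer, assuming newline-delimited messages; `JsonRpcFramer` is a hypothetical name, and the `initialize` parameters shown should be checked against the ACP specification rather than taken from here.

```python
import itertools
import json


class JsonRpcFramer:
    """Newline-delimited JSON-RPC 2.0 framing for talking to an agent over stdio.

    Sketch only: a real wrapper also has to answer requests coming from the
    agent (e.g. permission prompts) and stream notifications to the web UI.
    """

    def __init__(self):
        self._ids = itertools.count(1)
        self.pending: dict[int, str] = {}  # request id -> method name

    def request(self, method: str, params: dict) -> bytes:
        req_id = next(self._ids)
        self.pending[req_id] = method
        msg = {"jsonrpc": "2.0", "id": req_id, "method": method, "params": params}
        # json.dumps without indent escapes newlines, so one message is one line
        return (json.dumps(msg) + "\n").encode()

    def handle_line(self, line: bytes):
        msg = json.loads(line)
        if "method" not in msg and msg.get("id") in self.pending:
            method = self.pending.pop(msg["id"])
            if "error" in msg:
                return ("error", method, msg["error"])
            return ("result", method, msg.get("result"))
        # requests or notifications initiated by the agent
        return ("incoming", msg.get("method"), msg.get("params"))


rpc = JsonRpcFramer()
wire = rpc.request("initialize", {"protocolVersion": 1})  # params: check the ACP spec
print(wire)
print(rpc.handle_line(b'{"jsonrpc": "2.0", "id": 1, "result": {"protocolVersion": 1}}'))
# ('result', 'initialize', {'protocolVersion': 1})
```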

I think it’s the least I can do, and it also gives me a decent web UI to drop in for my own work when I absolutely have to use Copilot.

Haiku on ARM64

And, of course, since I have far too many projects already, I decided to see if I could get Haiku to boot on ARM64. I don’t particularly care about doing AI for salesy startupy business stuff, but I love using it to build things I think should exist, and I have quite a few more I’d like to make happen…